Change Data Capture

Acquire, Compare, Report, Refresh, Retain

Challenges

Operational data undergoes constant changes, additions, and deletions relative to historical data. Data warehouse maintenance and business groups need to compare tables and files, as well as capture, isolate, and analyze changes in data.

- complex or cumbersome

- prone to error and overload

- limited to a single database

- reliant on log data, and cannot readily use changed data in meaningful reports

Also, as data volumes grow, the regular reading and updating of the entire database is an impractical alternative for refreshing the warehouse. In other words, the feasibility of doing in-database work for deltas shrinks as table sizes grow.

Solutions

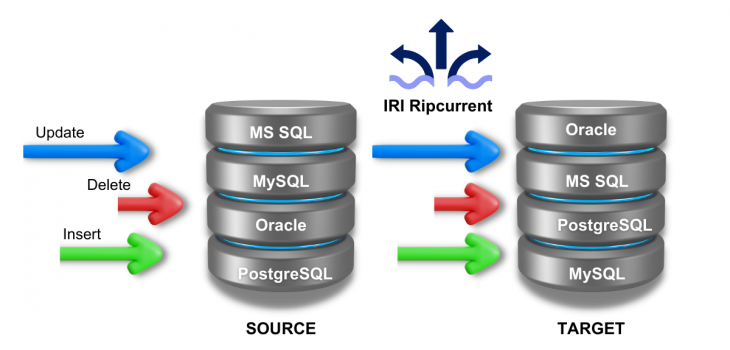

Capture and referesh MS SQL, MySQL, Oracle, and PostgreSQL targets in real-time when new rows are inserted, changed or deleted using IRI Ripcurrent, which monitors those logs for changes to the source data:

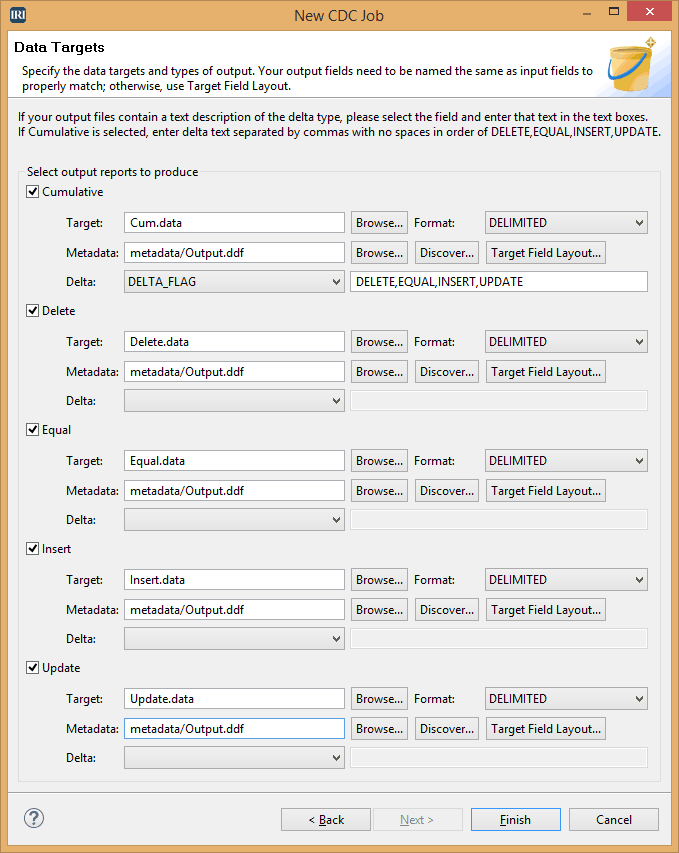

OR, report on the deltas (changes) off-line, in faster, portable, and more functional Sort Control Language (SortCL) jobs in IRI Voracity or IRI CoSort. SortCL's change data capture (CDC) approach is data-centric, rather than log-based, which:

- enables multiple source change analyses (not just from one or a few RDBs, but all of them, plus flat files)

- supports segmentation of inserts, deletes, and updates

- can be cumulative or incremental (refresh CDC)

- allows meaningful BI (report) generation against the update values

- precludes log sniffers, DB-specific triggers, or other complex designs

Compare huge table, file, and other connected sources with consolidated sort, join, and condition logic that identifies the deltas, and simultaneously:

- transform the data (cleanse, calculate, aggregate, etc.)

- convert data types, field positions, and target formats

- protect with field-level encryption, data masking, etc.

- report in custom detail and summary layouts

- refresh data warehouse tables with real-time updates

- bulk load pre-sorted data through DB load utilities

- output to flat files for archive, replication or hand-offs

Regardless of the target(s), this approach removes a major workload from the DBMS (which relies on triggers to update CDC tables). It also enables simultaneous business intelligence from the change details.

In a big data change capture scenario, you might want to start with a SELECT query in the compatible IRI FACT (Fast Extract) tool for Oracle, DB2, etc., to offload those rows generated after a certain timestamp to a flat file. Unqualified unloads are also very fast using FACT, and allow the entire transaction sets to be analyzed in the subsequent step.

At the same time, you can count the number of new, modified, or missing records in your reports, or consult SortCL's runtime statistics (which count inner and outer matches at each join). Label, log, and analyze changes in the transaction data to spot red flags and assess trends forensically.